Law, licensure, and technological innovation

Quick Take

- Law and technological innovation have always been in tension with one another

- Innovation usually comes first, and law follows as a moderating or restricting force

- Software development is, in the broad sweep of history, a new and developing discipline

- Use of the term “software engineer” raises questions about the responsibility and obligations of a party purportedly providing engineering services

- Creation of software for critical infrastructure would benefit from development of formal approval processes that assign responsibility to a final arbiter or arbiters

- Whether or not formal licensure, education and insurance requirements are the right approach remains to be seen

You might think that the title of this essay is a contradiction in terms, but I'd submit to you that law and innovation can be surprisingly hand-in-glove.

Legal change takes time. It usually follows technological innovation instead of preceding it. New technologies arrive before courts, legislators and lawyers understand their impact, and without an adequate legal framework to guide their development. Knowledge and vocabulary gaps complicate this. Engineers and lawyers speak different technical languages and serve different functions.

The law may need time to adjust and to function properly as experience guides legislatures and courts. Take the printing press, which made mass production of books possible (and probably led to the development of the novel as a genre). Gutenberg probably didn't consult with a copyright lawyer to consider how the printing press would impact publishers and authors. (If he had, he might have been waved off, so this isn't necessarily a bad thing.)

Over time, copyright laws developed to address what was a lesser risk in a pre-printing press world, where books were hand-copied by scribes. Gutenberg's printing press dates to the late 1430s. The Statute of Anne, the first English Copyright law, is dated 1709. If you're Amazon and want to develop drones to deliver products to consumers and think the FAA is slowing you down, it's an irritation – but eventually, you come around.

The same is true with construction and engineering. Safety elevators and tower cranes helped make the modern skyscraper possible. Licensing, inspection and code requirements needed to develop and adjust, as did the government agencies tasked with their safe operation. An interesting historical footnote: elevators didn’t work very well when they were first designed – they didn’t have brakes, so people kept dying. It was only the development of the safety brake that allowed them to be used widely.

There is a reason why people (mostly lawyers) still quote Holmes's "The Common Law," though it may seem formulaic to the point of being trite. This observation is just as on point now as when he wrote these words in 1881:

"The felt necessities of the time, the prevalent moral and political theories, intuitions of public policy, avowed or unconscious, even the prejudices which judges share with their fellow-men, have had a good deal more to do than the syllogism in determining the rules by which men should be governed. The law embodies the story of a nation's development through many centuries, and it cannot be dealt with as if it contained only the axioms and corollaries of a book of mathematics. In order to know what it is, we must know what it has been, and what it tends to become. We must alternately consult history and existing theories of legislation. But the most difficult labor will be to understand the combination of the two into new products at every stage. The substance of the law at any given time pretty nearly corresponds, so far as it goes, with what is then understood to be convenient; but its form and machinery, and the degree to which it is able to work out desired results, depend very much upon its past."

There are many approaches to aligning law and technology, and a solution or complete review of the literature is beyond the scope of this essay. I do like this view, from a piece on military drones that I came across several years ago, where the authors suggest "two approaches to aligning technology and the law: 1. Finding ways to fit emerging technologies into the law as a program evolves. 2. Expressing the legal and ethical constraints as requirements to drive system design."[1]

This long through-line brings me to what I am pondering today, which is the role that law and licensing should have in software design and development, and the use of the word "engineering" in connection with this.

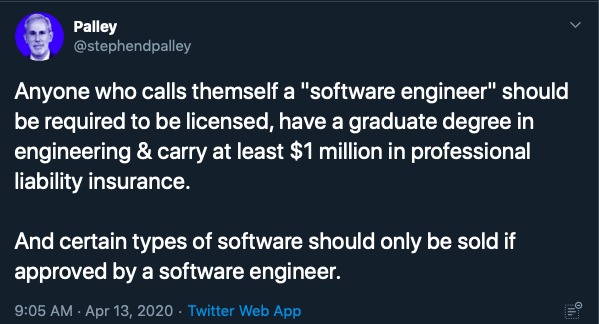

Yesterday, I posted a tweet suggesting that anyone who calls themselves a software engineer should be required to be licensed, educated and insured as such, and that certain types of software should only be allowed to be sold by such licensees.

This produced what I suppose should have been predictable outrage, in which I was told I was an idiot, that this was the worst take ever, it was an evil position and so forth and so on, etcetera etcetera etcetera.

Look, it's Twitter, and it was intellectual bait, so I am not complaining. The experience also gave me lots of interesting feedback and insights.

There were also plenty of nuanced responses, pointing out the difference between software development and the disciplines of structural, mechanical, and electrical engineering. All of which is true. Several people also suggested that software should be evaluated based on results, not on the people who create it. And one or two people I think got my drift, which was more about the meaning of the word "engineer" and the responsibility and legal obligations that in practice come with it – at least in the worlds of construction and engineering with which I am familiar as a lawyer.

So let's stipulate, in case I wasn't clear, that I no more think that software development – writing code – should be subject to a blanket licensure requirement than I think that painting or drawing buildings should require a PE or building department code approval. That is not what I am saying.

What I am saying is that the word engineer – at least for the last, oh, say 150 years or so – means something more than a designer. It connotes responsibility, power and liability. When an engineer approves plans for a building or a bridge, they take on personal and moral responsibility for the design. If something goes wrong, it is on them – they can be sued for professional negligence and, in some cases, even end up being criminally prosecuted.

I am not suggesting that a social media platform or a widget delivery service or game design is either simple to do well or unimportant. Writing and deploying good software is hard! I would say, however, that it may not be the type of software development or construction that requires engineering as I understand the term or, for that matter, licensure. If so, maybe the people who build and design that aren't engineers? I am also not sure why that is a controversial statement, though it may offend someone.

On the other hand, I, for the life of me, do not understand why the design of software to monitor nuclear power plant core temperatures should not be subject to ultimate approval by someone with professional credentials, licensure and insurance. This seems non-controversial to me. And I would assume that (as with other types of engineering) this would be a self-regulating group, with industry-created standards (this is how the bar works, for lawyers).

Indeed, some jurisdictions already require anyone who uses the title "engineer" (software or otherwise) to be licensed as such.

The argument that software is too dynamic and changeable to require any sort of formal approval process (or licensure) also makes no sense to me. If we have developed technology complex enough to land planes or drive cars, we certainly have the capacity to create standards, rules and a liability regime to ensure quality, auditability and responsibility for when things go wrong.

Nor do I buy the argument that this sort of "credentialism" creates an impediment to wealth, innovation or advancement, any more than the licensure of doctors does. I am sure that old-school sawbones felt the same, just as scriveners decried the professionalization of law and the licensure that came with it.

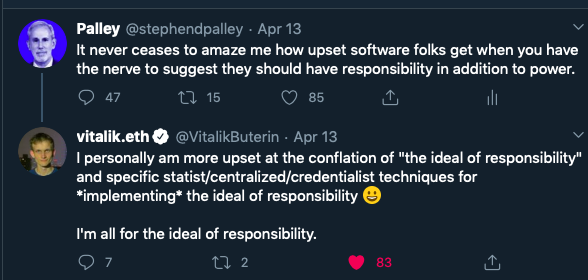

Look, the law is not the answer to technological change, and licensure isn't either. But what we want is responsible innovation, not innovation for its own sake. That requires more than a gesture to the idea of responsibility without any implementation ("the ideal of responsibility").

Technology is and can be a powerful force for good. And I love software development as much as I love poetry and music – I have spent days refactoring code to make things work with as few words as possible, and I am not suggesting that anyone needs a license to do that.

I am suggesting, however, that we need to think about how the law develops and adjusts to this relatively new technology – bearing in mind that licensure can protect not only end-users but also creators by placing the onus of final approval on the person who wields that stamp.

Most will agree that technological change isn't a good thing in and of itself, even though software can be incredibly useful. If law slows development in order to make sure that reactor cores are less likely to explode, one could just as quickly conclude that it's doing just fine.

These are hardly new arguments, of course. As Emerson pointed out in 'Self-Reliance' (1841), just as the Industrial Revolution was gaining steam:

"...the civilized man has built a coach, but has lost the use of his feet . . . The arts and inventions of each period are only its costume, and do not invigorate men. The harm of the improved machinery may compensate its good. Hudson and Behring accomplished so much in their fishing-boats, as to astonish Parry and Franklin, whose equipment exhausted the resources of science and art. Galileo, with an opera-glass, discovered a more splendid series of celestial phenomena than any one since. Columbus found the New World in an undecked boat. It is curious to see the periodical disuse and perishing of means and machinery, which were introduced with loud laudation a few years or centuries before. The great genius returns to essential man. We reckoned the improvements of the art of war among the triumphs of science, and yet Napoleon conquered Europe by the bivouac, which consisted of falling back on naked valor, and disencumbering it of all aids. The Emperor held it impossible to make a perfect army, says Las Casas, ‘without abolishing our arms, magazines, commissaries, and carriages, until, in imitation of the Roman custom, the soldier should receive his supply of corn, grind it in his hand-mill, and bake his bread himself.'"

Emerson wasn't a Luddite; he was a humanist. But the point is that new technology isn't good, or bad, in and of itself. It's what you make of it, and the impact it has on human lives.

I'd suggest, with the deepest respect and affection to my friends and colleagues who create magic with code, that law and licensure, when understood with nuance, can help them do good – not restrain them.

And even if we do not have an answer today – and perhaps licensure isn't the answer – it seems a fair question to ask how responsibility for the design of critical infrastructure built using software should be allocated, audited and monitored.

That is a question worth asking and a conversation worth having.

© 2023 The Block. All Rights Reserved. This article is provided for informational purposes only. It is not offered or intended to be used as legal, tax, investment, financial, or other advice.